A feel-good ‘lost dog’ Super Bowl commercial reveals how comfortable Ring has become implying access to private video feeds.

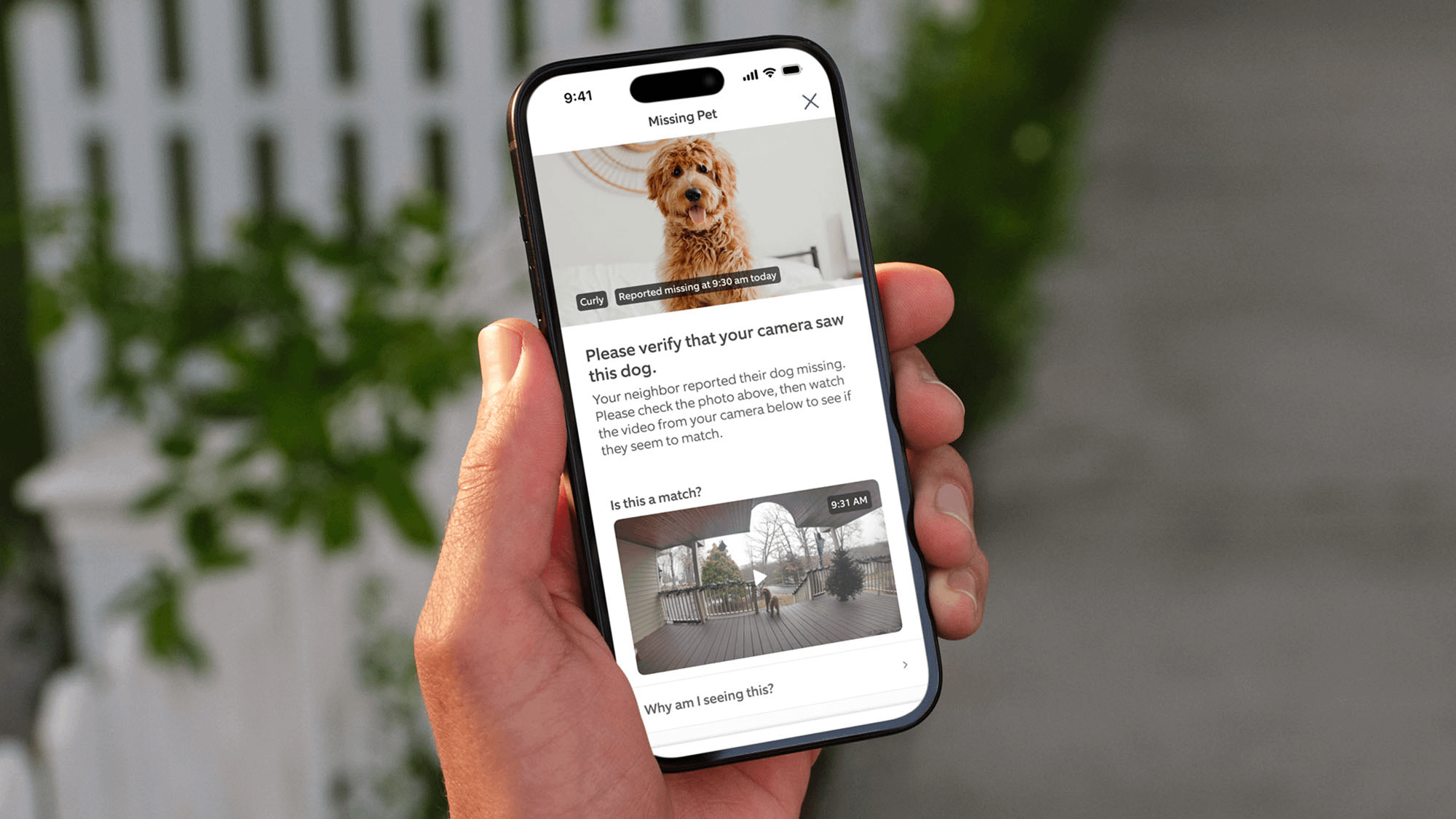

During this year's Super Bowl, Ring ran a feel-good commercial about reuniting lost dogs with their owners. You've probably seen it, or something like it. A worried family. A missing pet. Neighbors checking their Ring footage. Everyone coming together. The dog gets found. Tears of joy. Real heartwarming stuff. Exactly the kind of story Silicon Valley loves to tell about itself: technology bringing communities together, making the world a little bit better, one doorbell camera at a time.

Except there's a problem. Actually, there's a massive problem that the entire ad glosses over with a layer of emotional manipulation so thick you could spread it on toast.

How does Ring know what's happening at my front door?

Because that's what the ad implies. Not subtly. Not theoretically. The whole narrative depends on it. Ring—owned by Amazon, let's not forget—is positioning itself as an active participant in identifying, tracking, and surfacing events captured by private doorbell cameras. They're not just providing the hardware anymore. They're suggesting they can see what's on it, analyze it, and use it for purposes beyond what you explicitly intended when you installed the thing. And once you say it out loud like that, once you put it in a Super Bowl commercial or a YouTube pre-roll, you can't take it back.

This Isn't About Paranoia

I'm not saying Amazon employees are sitting in some dystopian control room watching your porch in real time, eating popcorn while you bring in the groceries. That's the lazy rebuttal people reach for when you bring this stuff up, and it completely misses the point.

The issue isn't how Ring does it. The issue isn't whether there's a human being actively watching or whether it's all automated through machine learning models that can identify dogs, cars, packages, and people with terrifying accuracy. The issue is that Ring felt comfortable implying access at all. That they built an entire marketing campaign around the premise that footage from your private security camera could somehow be leveraged to solve community problems.

I am calling it out now.

A doorbell camera is sold as a private security device. You buy it to see who's at your door. To catch porch pirates. To have evidence if something happens. It's supposed to serve the homeowner—nobody else. It's your camera, your footage, your property. The second a company starts framing that footage as something useful for broader "community" purposes, as a resource that can be tapped into when needed, the boundary's already been crossed. You don't get to quietly reclassify private surveillance as a public resource and expect nobody to notice. You don't get to turn my security camera into your neighborhood watch program without asking.

The Slippery Part Isn't the Tech—It's the Normalization

Ring will say this is automated. Or anonymized. Or opt-in. Or AI-powered. Or protected by ironclad privacy policies reviewed by teams of lawyers who went to very expensive schools. Fine. Maybe all of that's true. Maybe every technical detail checks out. Maybe they've got frameworks and protocols and oversight committees.

But here's the uncomfortable reality that tech companies never want to acknowledge: normalization always comes before expansion. Always. You start with something innocuous, something nobody could possibly object to. You get people used to the idea. You make it feel normal, even good. Then you expand the scope. Then you expand it again. And again. And by the time anyone thinks to push back, the infrastructure is already built, the precedent is already set, and you're the paranoid weirdo standing in the way of progress.

Today it's a lost dog. Who could object to finding a lost dog? Tomorrow it's "suspicious activity" in the neighborhood. Then it's "public safety insights" shared with local municipalities. Then it's formal law enforcement partnerships where cops can request footage without a warrant. Then it's behavior modeling and pattern recognition and predictive analytics about who comes and goes and when.

Oh wait, we're already partway down that list. Ring already has partnerships with hundreds of police departments across the country. This isn't speculation. This isn't a slippery slope fallacy. This is documented reality. We've already slid down the slope. We're just arguing about how far.

Amazon Changes Everything

And look, this isn't some scrappy startup with good intentions and bad foresight. This isn't a couple of Stanford dropouts who didn't think through the implications of their brilliant idea. This is Amazon. Let that sink in for a second.

A company whose entire business model is data aggregation at planetary scale. A company that doesn't just collect information—it connects it across platforms, across devices, across every interaction you have with their ecosystem. They know what you buy. They know what you watch. They know what you ask Alexa. They know what's in your shopping cart, what's on your wish list, what you searched for but didn't buy. They have your purchasing patterns, your location data, your voice recordings, your viewing habits, your reading preferences. They build models of you so accurate it's genuinely unsettling.

When Amazon owns the camera on your front door, context matters. When the same company that knows you bought dog food, anxiety medication, and a book about divorce also has a camera pointed at everyone who enters and leaves your house, the math changes entirely. The potential for correlation, for inference, for building comprehensive profiles of people's lives, becomes almost unlimited.

And when that same company starts running commercials suggesting they can help find things using footage from those cameras, people are absolutely right to ask: Who's looking? Who decides what's worth looking for? Under what circumstances does my private footage become available? What's the process? What are the safeguards? What happens to that data afterward? Where does it go? How long is it kept? Who else gets access? And what happens next, after we've all accepted this as normal?

This Is How Privacy Actually Dies

Not through lawsuits. Not through congressional hearings. Not through dramatic data breaches that make the front page of the New York Times. Those things matter, but they're not how privacy actually erodes in practice.

Privacy dies through friendly commercials and soft language and emotional manipulation. Through ads specifically designed to make you feel bad for questioning them. Through narratives that position surveillance as community, monitoring as safety, data collection as service. It dies through the slow accumulation of small concessions, each one justified by convenience or security or helping find a lost dog.

"Why wouldn't you want to help? Don't you care about your neighbors? Don't you want to live in a safe community? What are you trying to hide?"

Because I didn't buy a neighborhood surveillance device. I bought a doorbell. I bought a tool to serve my needs, not to participate in a distributed monitoring network. I didn't sign up to be a node in Amazon's data collection infrastructure. I didn't agree to let my front porch become a camera in someone else's security apparatus, even if that someone is my own HOA or police department.

Final Thought

Ring didn't reveal some shocking new capability in this commercial. The technology has been there for years. The partnerships have been in place. The data has been flowing. None of that is news.

What it revealed was something more important: how comfortable the company has become reframing your private space as something it can leverage. How confident it is that you'll accept this framing. How certain it is that the emotional appeal—think of the dogs! think of the children! think of the community!—will overwhelm any privacy concerns you might have.

And once a company starts talking that way in public, in advertisements designed to reach millions of people, the internal conversation is already much further along. They've already done the legal review. They've already built the infrastructure. They've already mapped out the next five moves. Public messaging is always the last step, not the first. By the time you see the commercial, the decisions have already been made.

Question it now. Push back now. Demand answers now. Because later, once it's normalized, once it's just how things work, you won't be asked. You'll be told. And your doorbell will already be watching.

My advice? Trash your Ring Doorbell NOW.
